SwarmVault

A local-first knowledge compiler, built on the LLM Wiki pattern. Most "chat with your docs" tools answer a question and throw away the work. SwarmVault keeps a durable wiki between you and raw sources — the LLM does the bookkeeping, you do the thinking.

Three-Layer Architecture

SwarmVault uses three layers, following the pattern described by Andrej Karpathy:

- Raw sources (raw/) are your curated collection of source documents. These are immutable: SwarmVault reads from them but never modifies them.
- The wiki (wiki/) holds LLM-generated and human-authored markdown: source summaries, entity pages, concept pages, cross-references, dashboards, and outputs. The wiki is the persistent, compounding artifact.
- The schema (swarmvault.schema.md) defines how the wiki is structured, what conventions to follow, and what matters in your domain.

In the tradition of Vannevar Bush's Memex (1945) — a personal knowledge store with associative trails between documents — SwarmVault treats the connections between sources as valuable as the sources themselves.

Use it for personal knowledge management, research deep-dives, book companions, code documentation, business intelligence, or any domain where you accumulate knowledge over time. You can also start with just the standalone schema template — zero install, any LLM agent.

No provider setup is required to begin. The built-in heuristic path works locally and offline; external model providers are optional upgrades for richer synthesis and capability-specific workflows.

Code files always stay local: SwarmVault parses them on your machine via tree-sitter. Configured providers are used only for non-code text and image workflows that actually need them.

Install

npm install -g @swarmvaultai/cli

Requires Node >=24. See Installation for details.

What a Vault Looks Like

my-vault/
├── swarmvault.schema.md   user-editable vault instructions
├── raw/                   immutable source files and localized assets
├── wiki/                  compiled wiki: sources, concepts, entities, code, outputs, graph, context, tasks
├── state/                 graph.json, retrieval/, context packs, task records, embeddings, sessions, approvals
├── .obsidian/             optional Obsidian workspace config
└── agent/                 generated agent-facing helpers

Input Types

SwarmVault can mix documents, research URLs, transcripts, media, data exports, browser clips, and code in one vault. Start with the quickstart, then use the input type reference when you need the full supported-format matrix.

What You Get

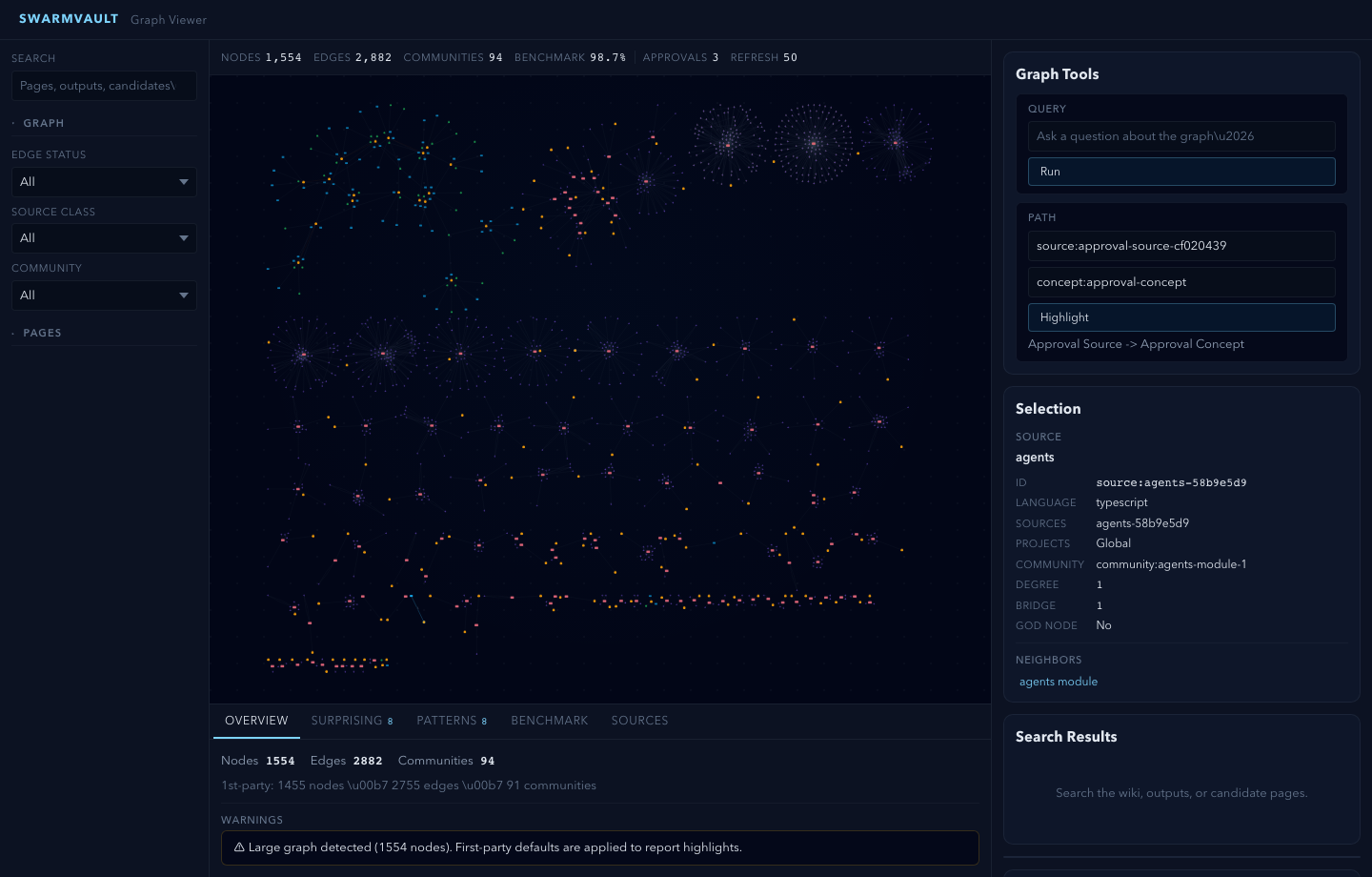

Knowledge graph with provenance - every edge traces back to a specific source and claim. Nodes carry freshness, confidence, and community membership.

God nodes and communities - highest-connectivity bridge nodes identified automatically. Graph report pages surface surprising connections with plain-English explanations.
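The README does not say how bridge nodes are scored. A minimal sketch, assuming a node ranks higher the more distinct communities its neighbors belong to, with raw degree as the tie-breaker. The `Edge` shape, the `community` map, and `rankBridgeNodes` are hypothetical and not SwarmVault's graph.json schema or scoring.

```typescript
// Hypothetical edge shape; SwarmVault's real graph.json schema may differ.
interface Edge { source: string; target: string; }

interface BridgeScore { id: string; degree: number; communitiesTouched: number; }

// Assumed heuristic: rank nodes by how many distinct communities their
// neighbors belong to, breaking ties by degree (number of neighbors).
function rankBridgeNodes(edges: Edge[], community: Map<string, number>): BridgeScore[] {
  const neighbors = new Map<string, Set<string>>();
  const link = (a: string, b: string) => {
    if (!neighbors.has(a)) neighbors.set(a, new Set());
    neighbors.get(a)!.add(b);
  };
  // Treat the graph as undirected for connectivity purposes.
  for (const e of edges) {
    link(e.source, e.target);
    link(e.target, e.source);
  }
  const scores: BridgeScore[] = [];
  for (const [id, ns] of neighbors) {
    const touched = new Set<number>();
    for (const n of ns) {
      const c = community.get(n);
      if (c !== undefined) touched.add(c);
    }
    scores.push({ id, degree: ns.size, communitiesTouched: touched.size });
  }
  return scores.sort(
    (a, b) => b.communitiesTouched - a.communitiesTouched || b.degree - a.degree,
  );
}
```

With two triangles joined by one edge, the two endpoints of that edge come out on top because they are the only nodes whose neighbors span both communities.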

Schema-guided compilation - each vault carries swarmvault.schema.md so the compiler follows domain-specific naming rules, categories, and grounding requirements.

Save-first queries and chat sessions - answers write to wiki/outputs/ by default, while swarmvault chat persists multi-turn transcripts under wiki/outputs/chat-sessions/ with structured state in state/chat-sessions/. Query and chat support markdown, report, slides, chart, and image output formats.

Agent context packs - swarmvault context build assembles a bounded pack of pages, graph evidence, citations, and explicit omissions for an implementation goal, PR review, or handoff. Packs are saved in state/context-packs/ with markdown companions in wiki/context/.
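One way to think about a "bounded pack with explicit omissions" is greedy selection under a token budget that records what it skipped. This is a sketch under assumptions: the `PackItem` shape, the relevance `score`, and `buildContextPack` are hypothetical, and SwarmVault's actual selection logic is not described here.

```typescript
// Hypothetical candidate: a page, graph edge, or citation with an
// estimated token cost and a relevance score for the current goal.
interface PackItem { id: string; kind: "page" | "edge" | "citation"; tokens: number; score: number; }

interface Omission { id: string; reason: string; }

interface ContextPack { goal: string; included: PackItem[]; omitted: Omission[]; usedTokens: number; }

// Greedy selection: walk candidates from highest to lowest score, keep
// each one that still fits the budget, and record every skipped item
// with a reason instead of dropping it silently.
function buildContextPack(goal: string, candidates: PackItem[], budget: number): ContextPack {
  const ordered = [...candidates].sort((a, b) => b.score - a.score);
  const included: PackItem[] = [];
  const omitted: Omission[] = [];
  let used = 0;
  for (const item of ordered) {
    if (used + item.tokens <= budget) {
      included.push(item);
      used += item.tokens;
    } else {
      omitted.push({ id: item.id, reason: `needs ${item.tokens} tokens, ${budget - used} left` });
    }
  }
  return { goal, included, omitted, usedTokens: used };
}
```

Recording omissions lets a reviewer or downstream agent see what was left out of the pack rather than assuming it was irrelevant.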

Static AI export packs - swarmvault export ai writes llms.txt, full text, JSON-LD graph data, manifest metadata, and per-page siblings so another tool can consume the compiled wiki without starting a server.

Agent task ledger - swarmvault task start|update|finish|resume records task goals, decisions, graph evidence, linked context packs, changed paths, outcomes, and follow-ups as JSON plus markdown under state/memory/tasks/ and wiki/memory/tasks/. Existing memory commands remain compatibility aliases.

Retrieval, hybrid search, and rerank - full-text retrieval stays the baseline under state/retrieval/, but an embedding-capable provider can fuse semantic page hits into query context. Set retrieval.rerank: true when you want the query provider to rerank the merged hits before answer generation.
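The README does not name the fusion method. As an illustration only, this sketch swaps in reciprocal rank fusion (RRF), a common way to merge a full-text ranking with a semantic ranking; the function name and the conventional constant `k = 60` are assumptions, not SwarmVault settings.

```typescript
// Reciprocal rank fusion: every ranked list adds 1 / (k + rank) to the
// score of each page it contains (ranks are 1-based), so pages that rank
// well in several lists rise to the top of the merged list.
function reciprocalRankFusion(rankings: string[][], k = 60): { id: string; score: number }[] {
  const scores = new Map<string, number>();
  for (const list of rankings) {
    list.forEach((id, index) => {
      scores.set(id, (scores.get(id) ?? 0) + 1 / (k + index + 1));
    });
  }
  return [...scores.entries()]
    .map(([id, score]) => ({ id, score }))
    .sort((a, b) => b.score - a.score);
}
```

With `retrieval.rerank: true`, the merged list would then be reordered by the query provider before answer generation. That step is model-driven and is not sketched here.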

Reviewable changes - compile --approve stages changes into approval bundles. New concepts and entities land in wiki/candidates/ first. Nothing mutates silently.

Token-budgeted compile and git-aware commits - compile --max-tokens <n> trims lower-priority generated pages when the vault needs to stay inside a bounded context window, and ingest, compile, or query can auto-commit wiki/ plus state/ changes with --commit.

Guided sessions - ingest --guide, source add --guide, source reload --guide, source guide <id>, and source session <id> generate resumable source sessions, source reviews, and integration-oriented source guide pages before you accept canonical updates. Set profile.guidedIngestDefault when guided mode should be the default for ingest/source commands, and use --no-guide for one-off light runs. profile.guidedSessionMode decides whether those approval-bundled updates target canonical pages or stay in wiki/insights/.

Knowledge dashboards - wiki/dashboards/ gives you recent sources, a reading log, a timeline, source sessions, source guides, a research map, contradictions, and open questions. They work as plain markdown first, and profile.dataviewBlocks can append Dataview blocks for a more Obsidian-native view.

Graph report health signals - graph report artifacts include community cohesion summaries, isolated-node and ambiguity warnings, and stronger follow-up questions when the graph has weak or ambiguous regions.

Visual + post-ready share kit - every compile writes wiki/graph/share-card.md, wiki/graph/share-card.svg, and wiki/graph/share-kit/; swarmvault graph share --post prints concise text, swarmvault graph share --svg [path] writes a 1200x630 visual card, and swarmvault graph share --bundle [dir] writes a portable folder for posting, linking, or screenshotting.

Deep lint defaults - set profile.deepLintDefault when swarmvault lint should include the advisory deep-lint pass by default. Use --no-deep for one-off structural-only runs.

Configurable profiles - the profile block in swarmvault.config.json lets you compose presets, dashboardPack, guidedSessionMode, guidedIngestDefault, deepLintDefault, and dataviewBlocks instead of waiting for a separate hardcoded product mode for every use case.
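The profile defaults described above (guidedIngestDefault with --guide/--no-guide, deepLintDefault with --no-deep) follow a common precedence rule: an explicit one-off flag wins, otherwise the profile default applies. A minimal sketch, assuming boolean profile values and "off" when neither is set. The `Profile` interface covers only two of the listed keys, and the helper names are hypothetical.

```typescript
// Hypothetical subset of the profile block; the key names come from the
// README, the boolean value types are assumptions.
interface Profile {
  guidedIngestDefault?: boolean;
  deepLintDefault?: boolean;
}

// A command-line toggle: true when the command opts in (e.g. --guide),
// false for an explicit opt-out (--no-guide, --no-deep), undefined when absent.
type CliFlag = boolean | undefined;

// Explicit flag first, then the profile default, then off (assumed fallback).
function resolveToggle(flag: CliFlag, profileDefault: boolean | undefined): boolean {
  if (flag !== undefined) return flag;
  return profileDefault ?? false;
}

function guidedIngest(profile: Profile, flag: CliFlag): boolean {
  return resolveToggle(flag, profile.guidedIngestDefault);
}

function deepLint(profile: Profile, flag: CliFlag): boolean {
  return resolveToggle(flag, profile.deepLintDefault);
}
```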

Optional model providers - OpenAI, Anthropic, Gemini, Ollama, OpenRouter, Groq, Together, xAI, Cerebras, generic OpenAI-compatible, and custom adapters. Or stay fully local/offline with the built-in heuristic.

Agent integrations - explicitly install rules for Codex, Claude Code, Cursor, Goose, Pi, Gemini CLI, OpenCode, Aider, GitHub Copilot CLI, Trae, Claw/OpenClaw, Droid, Kiro, Hermes, Google Antigravity, VS Code Copilot Chat, and the extended skill-bundle roster. init, quickstart, scan, and clone leave project-local rule files alone unless you opt into configured installs. Optional graph-first hooks bias supported agents toward the wiki before broad search.

MCP server - swarmvault mcp exposes the vault to any compatible agent client over stdio, including context-pack, task, memory compatibility, graph clustering, and retrieval health tools. The repository also ships Docker/registry metadata for stdio container validation.

Automation - watch mode, git hooks, recurring schedules, and inbox import keep the vault current without manual intervention. Repo-aware watch now takes a faster code-only path when tracked changes stay inside code files.

Blast radius, graph status, validation, query filters, tree, merge, clustering, and graph reports:
- graph blast <target> traces reverse-import impact for code changes.
- graph status [path] checks graph freshness without writing watch artifacts; check-update [path] is the top-level compatibility alias for the same read-only check.
- graph stats prints lightweight counts and relation mix.
- graph validate [graph] --strict checks graph artifact integrity before export/merge/push workflows.
- graph update [path] blocks unexpected node/edge shrinkage unless --force is explicit; update [path] is the top-level compatibility alias.
- watch [path] --once targets one repo root without persisting watch config.
- graph query filters traversal by relation, context group, evidence class, node type, or language.
- graph tree (also available as tree) writes an interactive source/module/symbol HTML tree with a node inspector.
- graph merge (also available as merge-graphs) combines SwarmVault or node-link JSON graph artifacts.
- graph cluster [--resolution <n>] recomputes communities and report artifacts without re-ingesting; cluster-only [vault] is the top-level compatibility alias.
- graph export --report writes a lightweight self-contained HTML report when you want a shareable graph status snapshot instead of the full interactive viewer.
- graph export --neo4j <path> is an alias for the Cypher export used before Neo4j imports.
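Reverse-import impact can be illustrated as a breadth-first walk over import edges in the reverse direction. Every module that imports the target, directly or transitively, is in its blast radius. This is a sketch of the general technique, not graph blast's implementation; the `ImportEdge` shape and `blastRadius` name are hypothetical.

```typescript
// Hypothetical import edge: module `from` imports module `to`.
interface ImportEdge { from: string; to: string; }

// Breadth-first search over reversed import edges. Returns each affected
// module with its distance (in import hops) from the changed target.
// The visited check keeps import cycles from looping forever.
function blastRadius(edges: ImportEdge[], target: string): Map<string, number> {
  const importers = new Map<string, string[]>();
  for (const { from, to } of edges) {
    const list = importers.get(to) ?? [];
    list.push(from);
    importers.set(to, list);
  }
  const depth = new Map<string, number>([[target, 0]]);
  const queue: string[] = [target];
  while (queue.length > 0) {
    const current = queue.shift()!;
    for (const importer of importers.get(current) ?? []) {
      if (!depth.has(importer)) {
        depth.set(importer, depth.get(current)! + 1);
        queue.push(importer);
      }
    }
  }
  depth.delete(target); // the changed module itself is not counted as impacted
  return depth;
}
```

Changing `db` in a graph where `service` imports `db` and both `api` and `cli` import `service` would report `service` at distance 1 and `api`/`cli` at distance 2.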

Graph diff and Obsidian export - swarmvault diff compares the current graph against the last committed baseline, while graph export --obsidian writes a note bundle that preserves wiki folders, graph connections, community notes, orphan stubs, copied assets, and a minimal .obsidian config.
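At its core, a graph diff against a baseline is a set difference over node IDs and edge keys. A minimal sketch, assuming the hypothetical `GraphSnapshot` shape below. It does not reflect swarmvault diff's actual output format or how it locates the last committed baseline.

```typescript
// Hypothetical snapshot shape; not SwarmVault's graph.json schema.
interface GraphSnapshot { nodes: string[]; edges: { source: string; target: string; relation: string }[]; }

interface GraphDiff { addedNodes: string[]; removedNodes: string[]; addedEdges: string[]; removedEdges: string[]; }

// Edges are compared by a "source -relation-> target" key, so a changed
// relation shows up as one removed edge plus one added edge.
const edgeKey = (e: GraphSnapshot["edges"][number]): string => `${e.source} -${e.relation}-> ${e.target}`;

// Items in `a` that are not in `b`, sorted for stable output.
function difference(a: Set<string>, b: Set<string>): string[] {
  return [...a].filter((x) => !b.has(x)).sort();
}

function diffGraphs(baseline: GraphSnapshot, current: GraphSnapshot): GraphDiff {
  const baseNodes = new Set(baseline.nodes);
  const currNodes = new Set(current.nodes);
  const baseEdges = new Set(baseline.edges.map(edgeKey));
  const currEdges = new Set(current.edges.map(edgeKey));
  return {
    addedNodes: difference(currNodes, baseNodes),
    removedNodes: difference(baseNodes, currNodes),
    addedEdges: difference(currEdges, baseEdges),
    removedEdges: difference(baseEdges, currEdges),
  };
}
```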

Adaptive graph communities - community detection auto-tunes Louvain resolution for small and sparse graphs, then splits oversized or low-cohesion communities so large-repo reports stay scannable. graph.communityResolution or one-off graph cluster --resolution <n> lets you pin a specific value when you want deterministic clustering.
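Louvain searches for a partition that maximizes modularity, and the resolution parameter γ scales the null-model penalty: higher values favor smaller communities. The sketch below only scores a given partition of an undirected, unweighted graph. It does not perform the Louvain search itself or SwarmVault's auto-tuning, and the names are hypothetical.

```typescript
// Hypothetical undirected edge between nodes a and b.
interface UEdge { a: string; b: string; }

// Modularity with resolution gamma for an undirected, unweighted graph:
//   Q = sum over communities c of [ L_c / m - gamma * (d_c / (2m))^2 ]
// where m is the edge count, L_c the number of edges inside c, and d_c
// the sum of the degrees of c's nodes.
function modularity(edges: UEdge[], community: Map<string, number>, gamma = 1): number {
  const m = edges.length;
  if (m === 0) return 0;
  const internal = new Map<number, number>();
  const degreeSum = new Map<number, number>();
  const bump = (map: Map<number, number>, key: number, by: number) =>
    map.set(key, (map.get(key) ?? 0) + by);
  for (const { a, b } of edges) {
    const ca = community.get(a);
    const cb = community.get(b);
    if (ca === undefined || cb === undefined) throw new Error(`unassigned node in edge ${a}-${b}`);
    bump(degreeSum, ca, 1); // each endpoint contributes one unit of degree
    bump(degreeSum, cb, 1);
    if (ca === cb) bump(internal, ca, 1);
  }
  let q = 0;
  for (const [c, d] of degreeSum) {
    q += (internal.get(c) ?? 0) / m - gamma * (d / (2 * m)) ** 2;
  }
  return q;
}
```

For two triangles joined by one edge, splitting at the bridge scores 5/14 at γ = 1 but -1/7 at γ = 2. The same partition can look good or bad depending on the resolution, which is why pinning a value gives deterministic clustering.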

Worktree artifact roots - SWARMVAULT_OUT=<dir> keeps swarmvault.config.json and swarmvault.schema.md in the project root while writing generated raw/, wiki/, state/, agent/, and inbox/ artifacts under the output root.

Built-in browser clipper - graph serve prints a bookmarklet URL that can ingest the current browser page into the running vault without leaving the page. The viewer workbench also exposes explicit capture modes, safe vault doctor repair, copyable suggested commands, and budgeted context-pack/task actions.

Human export ingest - transcript files, Slack exports, mailbox files, and calendar exports now flow through the same manifest, compile, search, and review pipeline as books, notes, and research captures.

Interactive ingest feedback - file and directory ingest emit bounded progress on stderr with the active file and processed content size, while JSON, MCP, watch, and CI-style flows stay quiet.

Large-repo hardening - big repo ingests and compile passes stay bounded, long provider-backed non-code analysis is chunked before model calls, nested .gitignore / .swarmvaultignore files are respected with .swarmvaultinclude allowlists, parser compatibility failures stay local to the affected sources with explicit diagnostics, and graph reports roll up tiny fragmented communities for readability.
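The README does not describe how long non-code text is chunked before model calls. A minimal sketch, assuming character count as a stand-in for tokens and paragraph boundaries as preferred split points. `chunkText` is hypothetical.

```typescript
// Split text into chunks of at most `maxChars` characters. Paragraphs
// (separated by blank lines) are packed together while they fit; a
// single paragraph longer than the limit is hard-split on its own.
function chunkText(text: string, maxChars: number): string[] {
  if (maxChars <= 0) throw new Error("maxChars must be positive");
  const chunks: string[] = [];
  let current = "";
  const flush = () => {
    if (current.length > 0) chunks.push(current);
    current = "";
  };
  for (const para of text.split(/\n\s*\n/)) {
    const trimmed = para.trim();
    if (trimmed.length === 0) continue;
    if (trimmed.length > maxChars) {
      flush();
      for (let i = 0; i < trimmed.length; i += maxChars) chunks.push(trimmed.slice(i, i + maxChars));
      continue;
    }
    const joined = current.length === 0 ? trimmed : `${current}\n\n${trimmed}`;
    if (joined.length <= maxChars) {
      current = joined;
    } else {
      flush();
      current = trimmed;
    }
  }
  flush();
  return chunks;
}
```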

Every edge is tagged extracted, inferred, or ambiguous - you always know what was found vs guessed.
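Because every edge carries one of three evidence labels, a report can summarize how much of the graph is extracted versus guessed, and a traversal can be restricted to extracted facts. The labels come from the README. The `evidence` field name and both helpers are hypothetical, and this is not how graph query implements its evidence-class filter.

```typescript
type EvidenceClass = "extracted" | "inferred" | "ambiguous";

// Hypothetical edge shape carrying its evidence label.
interface TaggedEdge { source: string; target: string; evidence: EvidenceClass; }

// Count edges per evidence class.
function evidenceMix(edges: TaggedEdge[]): Record<EvidenceClass, number> {
  const counts: Record<EvidenceClass, number> = { extracted: 0, inferred: 0, ambiguous: 0 };
  for (const e of edges) counts[e.evidence] += 1;
  return counts;
}

// Keep only edges whose evidence class is in the allowed list.
function filterByEvidence(edges: TaggedEdge[], allowed: EvidenceClass[]): TaggedEdge[] {
  const keep = new Set<EvidenceClass>(allowed);
  return edges.filter((e) => keep.has(e.evidence));
}
```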

Core Workflow

- Ingest a file path, directory, or URL into immutable source storage
- Shape the vault with swarmvault.schema.md
- Compile source manifests into wiki pages, graph data, and local search
- Query or explore the compiled vault and save useful answers back into wiki/outputs/
- Review, remember, lint, and automate with approval bundles, candidate queues, task ledgers, deep lint, inbox import, watch, and MCP
- Schedule recurring work with swarmvault schedule

Quick Start

swarmvault quickstart ./apps/api

swarmvault query "What are the key concepts?"

swarmvault doctor

swarmvault graph serve

For more control, use swarmvault init, swarmvault ingest, swarmvault add, and swarmvault compile directly. swarmvault init --profile accepts default, personal-research, or a comma-separated preset list such as reader,timeline. For fully custom behavior, edit the profile block in swarmvault.config.json and keep swarmvault.schema.md as the human-written intent layer.

Worked Examples

If you want a concrete walkthrough instead of a command summary, start with the worked examples. They mirror the example vault inputs and release-validation flows used by the project itself.

Why SwarmVault

Most "chat with your docs" tools answer a question and discard the work. SwarmVault keeps the work. Source manifests, layered schemas, markdown pages, approval bundles, query outputs, graph edges, and freshness state all remain as inspectable files you can diff, search, and reuse.